WAN 2.6 Image-Edit turns prompts into precise photo edits: adjusting color and lighting, restyling aesthetics, replacing backgrounds, removing objects, and refining details while preserving subject identity. It is built for stable, repeatable image-to-image pipelines and is available through a ready-to-use REST API with no cold starts and affordable pricing.


Your request will cost $0.035 per run.

For $1 you can run this model approximately 28 times.


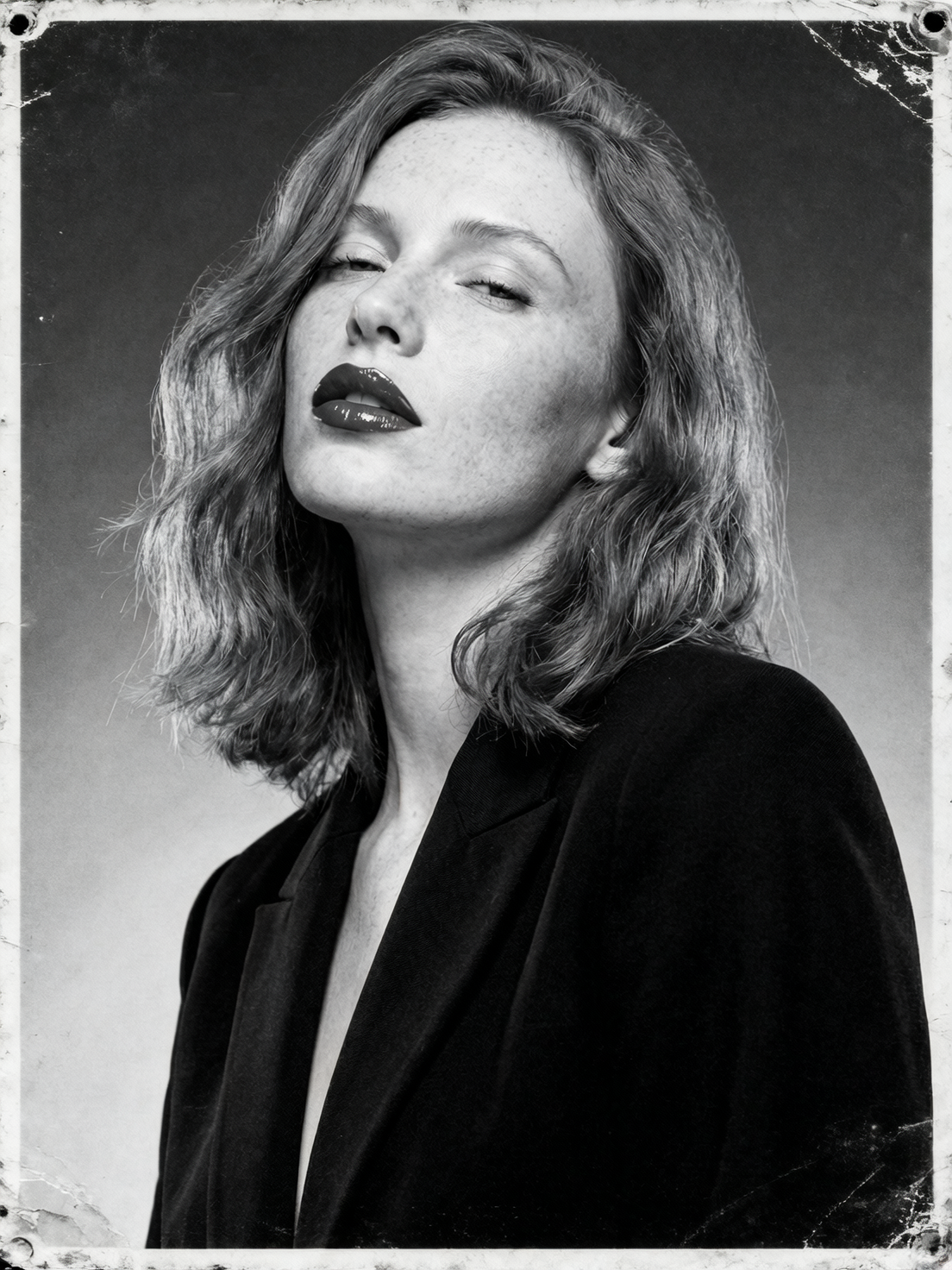

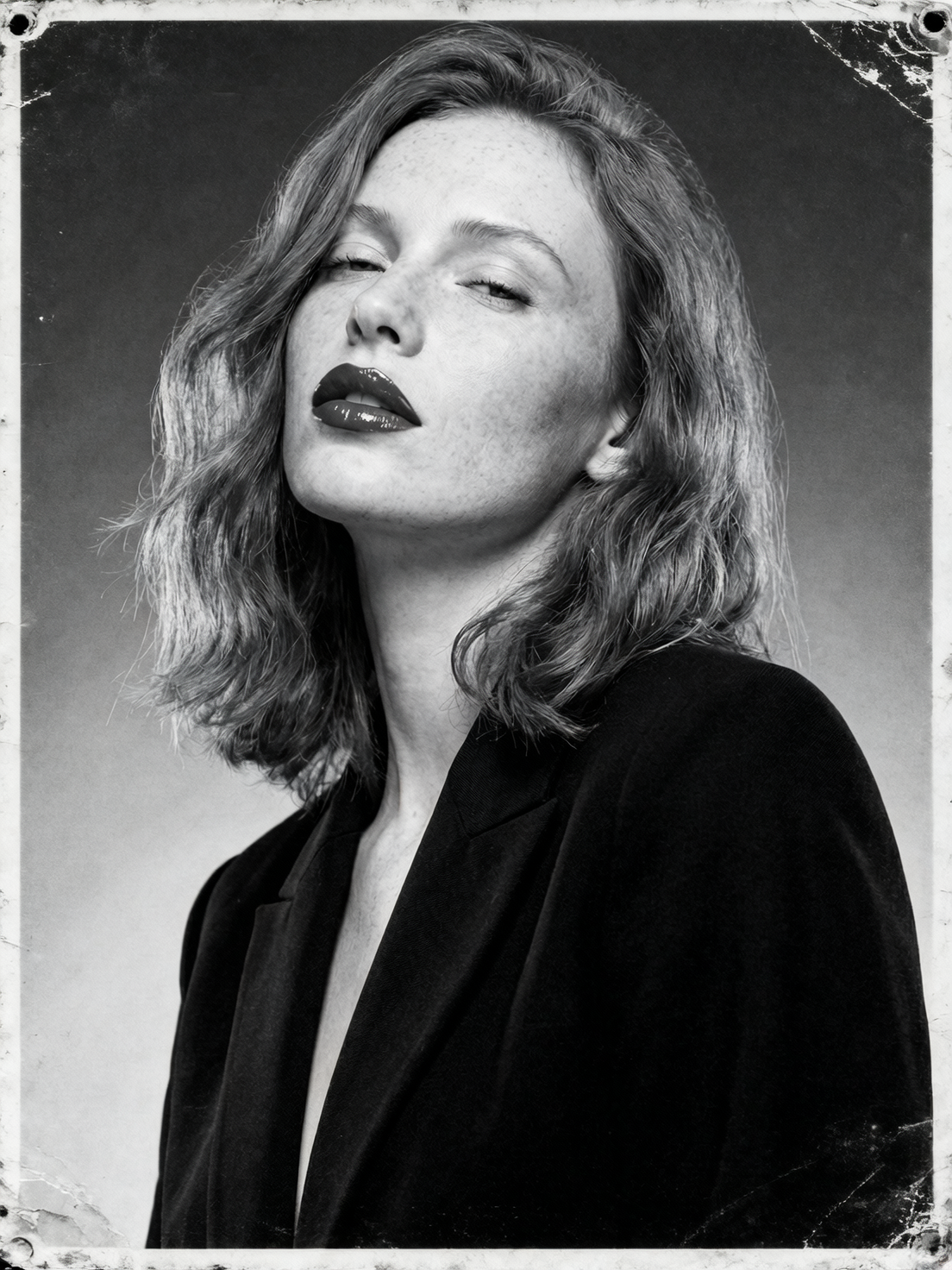


WAN 2.6 Image Edit

WAN 2.6 Image Edit (/wan-2.6/image-edit) is a prompt-driven image-to-image editing model for making targeted changes to an existing image. Upload one or more reference images, describe the edit in plain English, and the model returns an updated image while aiming to preserve the original structure, subject identity, and composition.

It’s a strong fit for fast creative iteration: changing clothing, colors, materials, background mood, adding/removing simple objects, and applying style adjustments without rebuilding the entire scene.

Why it stands out

- Prompt-based edits with strong intent-following for common creative workflows.

- Designed to preserve composition and key subject features while applying localized changes.

- Multi-image reference support for style/subject/background guidance (useful for fusion edits).

- Seed control for repeatable outputs and more consistent iteration.

- Negative prompting support to reduce artifacts (text, watermarks, extra fingers, etc.).

Capabilities

- Image-to-image editing from natural-language instructions

- Multi-image reference editing (1–4 inputs recommended, depending on workflow)

- Style shifts, background swaps, object addition/removal, and material/color changes

- More stable iterative refinement when using a fixed seed

Parameters

| Parameter | Description |
|---|---|
| prompt* | The edit instruction describing what to change and what to keep (e.g., “change the jacket to leather, keep face and pose unchanged”). |
| images* | One or more input images to edit (uploaded files or public URLs). |
| seed | Optional integer for reproducibility; use a fixed seed to iterate with smaller prompt changes. |
| negative_prompt | Optional list of things you don’t want (e.g., “text, watermark, extra fingers, blurry face”). |
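
As a rough illustration, the parameters above could be assembled into a JSON request body like this. The field names come from the table; the helper `build_edit_payload`, the placeholder image URL, and the assumption that the asterisk marks required fields are all illustrative and not confirmed by this page. No endpoint or authentication scheme is shown because none is documented here.

```python
import json
from typing import Optional


def build_edit_payload(
    prompt: str,
    images: list[str],
    seed: Optional[int] = None,
    negative_prompt: Optional[str] = None,
) -> dict:
    """Assemble a request body from the documented parameters (hypothetical helper)."""
    # prompt and images carry an asterisk in the table; treated here as required.
    if not prompt.strip():
        raise ValueError("prompt is required")
    if not images:
        raise ValueError("at least one image is required")

    payload: dict = {"prompt": prompt, "images": list(images)}
    # Optional fields are only included when they are set.
    if seed is not None:
        payload["seed"] = seed
    if negative_prompt:
        payload["negative_prompt"] = negative_prompt
    return payload


payload = build_edit_payload(
    prompt="change the jacket to leather, keep face and pose unchanged",
    images=["https://example.com/portrait.png"],  # placeholder URL
    seed=42,
    negative_prompt="text, watermark, extra fingers, blurry face",
)
print(json.dumps(payload, indent=2))
```

Leaving unset optional fields out of the body, rather than sending nulls, is a design choice of this sketch; check the API reference for how the service treats missing versus null values.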

How to use

- Upload one or more images (the main image to edit; optionally add style/background references).

- Write a clear prompt with two parts:

  - What to change (the edit)

  - What must stay the same (constraints)

  Example: “Replace the background with a rainy Tokyo street at night, keep the person’s face and pose unchanged.”

- Optional: add a negative_prompt to reduce unwanted artifacts.

- Optional: set a fixed seed to make iterations more comparable.

- Run the model, preview the output, and iterate step-by-step if needed.
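
The two-part prompt structure described above can be sketched as a small helper that keeps the edit and the constraints separate until the last moment. `compose_prompt` is a hypothetical name, and the "keep ... unchanged" phrasing simply mirrors the example in this section; the model does not require this exact wording.

```python
def compose_prompt(change: str, keep: list[str]) -> str:
    """Join the edit and its constraints into one instruction (hypothetical helper)."""
    change = change.strip().rstrip(".")
    constraints = [k.strip() for k in keep if k.strip()]
    if not change:
        raise ValueError("describe what to change")
    if not constraints:
        # No constraints given: send the edit on its own.
        return change + "."
    return f"{change}, keep {' and '.join(constraints)} unchanged."


prompt = compose_prompt(
    "Replace the background with a rainy Tokyo street at night",
    ["the person's face", "pose"],
)
print(prompt)
```

Keeping constraints in a list makes it easy to add one more ("the jacket color", say) between runs without rewriting the edit itself.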

Pricing

- $0.035 per run
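
For budgeting, the per-run price translates into run counts with simple arithmetic. The sketch below uses `Decimal` to avoid floating-point rounding; the function names are illustrative, and it assumes the flat per-run price listed here with no volume discounts or extra fees.

```python
from decimal import Decimal

PRICE_PER_RUN = Decimal("0.035")  # USD per run, as listed in this section


def runs_for_budget(budget_usd: str) -> int:
    """Whole number of runs that a budget covers."""
    return int(Decimal(budget_usd) // PRICE_PER_RUN)


def cost_of_runs(runs: int) -> Decimal:
    """Total cost in USD for a given number of runs."""
    return PRICE_PER_RUN * runs


print(runs_for_budget("1"))  # 28
print(cost_of_runs(100))     # 3.500
```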

Notes

- If edits spill into areas you want to preserve, strengthen constraints: “keep the face unchanged”, “keep the background intact”, “do not alter the text”.

- If outputs look inconsistent, try:

  - simplifying the prompt

  - using a fixed seed

  - iterating with smaller changes
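
One way to apply the last two suggestions together is to plan a series of small edits that all share one seed, so that differences between outputs are more likely to come from the prompt than from random variation. This is only a sketch: `plan_iterations` is a hypothetical helper, the file name and seed value are placeholders, and whether each step should start from the original image (as here) or from the previous output depends on your workflow.

```python
def plan_iterations(images: list[str], steps: list[str], seed: int = 1234) -> list[dict]:
    """Build one request body per small edit, all sharing the same seed."""
    return [
        {"prompt": step, "images": list(images), "seed": seed}
        for step in steps
    ]


plan = plan_iterations(
    images=["portrait.png"],  # placeholder file name
    steps=[
        "change the jacket to leather, keep the face unchanged",
        "change the jacket to brown leather, keep the face unchanged",
    ],
)
for body in plan:
    print(body["seed"], body["prompt"])
```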

Related Models

- WAN 2.5 Image Edit — Previous WAN image-edit model with a similar prompt-driven workflow for fast image revisions.

- Qwen Image Edit — General-purpose AI image editing with strong prompt adherence for everyday creative and product workflows.

- Qwen Image Edit Plus — Higher-quality image editing variant for cleaner results and better detail retention on complex scenes.

- Google Nano Banana Pro (Edit) — High-fidelity image editing with strong composition preservation and reliable text handling.